Do More Newsletter

This issue contains the featured article "Does AI Think?" and exciting product information about Flyfish.ai Intelligent Sales Agents, BoClaw – AI Agent Workspace for Developers & Knowledge Workers, Vigloo AI Powered Creator Dashboard – Business IQ for Creators, Kustomer Enterprise AI Platform – Plug In CX AI Without Migrating Your Helpdesk, and Amphix AI Infrastructure Platform – Turning AI Workloads into “Factories.”

Keep up to date on the latest products, workflows, apps and models so that you can excel at your work. Curated by Duet.

Stay ahead with the most recent breakthroughs—here’s what’s new and making waves in AI-powered productivity:

Fractal has added new intelligent sales agents to its Flyfish.ai platform, expanding the system to more than 35 coordinated AI agents that support the full sales lifecycle. The agents assist with researching accounts, identifying buying signals, generating personalized outreach messages, and keeping CRM pipelines updated in real time.

These AI agents are designed to free sales teams from time-consuming administrative tasks so they can spend more time engaging with prospects and closing deals. Early adopters have reported faster deal cycles and significant productivity improvements, demonstrating how multi-agent AI systems can automate large parts of revenue operations.

BoCloud Technology has launched BoClaw, an AI-native intelligent agent platform that acts as a central productivity hub for developers and knowledge workers on desktop and web. BoClaw focuses on keeping data “in-domain” and under fine-grained permissions while providing conversational AI collaboration, skill extensions, remote control over multiple IM platforms, and scheduled task automation in one environment. Users can tap into 36 built-in skills, 14,000 community skills, and a private skill repository, plus quick-start templates for things like slide deck generation and data analysis, effectively turning BoClaw into a programmable AI control room for day-to-day work. For teams that constantly context-switch between tools, this promises a more unified way to script and repeat AI-powered workflows.

Vigloo has introduced an AI-powered Creator Dashboard that gives creators a single place to see viewership, revenue, and performance insights alongside AI-generated recommendations. Instead of pulling numbers from multiple platforms manually, the dashboard consolidates data and uses AI to surface patterns, such as which content formats or posting times correlate with higher earnings or engagement. The tool aims to treat individual creators more like data-driven media businesses, offering transparent, easy-to-understand intelligence that can be used to plan content calendars, optimize sponsorship inventory, and forecast revenue. This is especially useful for mid-sized creators who have enough volume to need analytics, but not enough support staff to crunch numbers on their own.

Kustomer has launched a standalone enterprise AI platform for customer experience teams that layers predictive and deterministic, rule-based intelligence onto existing helpdesks with no migration required. The platform plugs into current ticketing systems and uses AI to classify, route, and assist with responses, combining generative models with transparent, rules-driven workflows so leaders can keep control over how automation behaves. Because it is designed to work on top of existing tools instead of replacing them, organizations can modernize support operations, experiment with AI-driven deflection and summarization, and roll out virtual agents at their own pace. For CX leaders, the big appeal is lowering risk: they can test AI gains in productivity and customer satisfaction without first migrating off their current helpdesk.

RAVEL, Strata Expanse, and Available Infrastructure jointly launched the Amphix AI Infrastructure Platform, a modular, end-to-end environment for running AI workloads at scale across 30 U.S. sites. Amphix bundles site-ready land, resilient power, secure edge networking, certified compute stacks, and an intelligent orchestration layer so organizations can move from pilot experiments to production AI “factories” with a consistent model. The platform emphasizes policy-driven, power-aware orchestration and offers AI Centers of Excellence (COEs) where enterprises can validate workloads (such as RAG, life sciences, or financial services) under real-world thermal and performance conditions before committing capital. For companies struggling to go beyond prototypes, Amphix essentially productizes the physical and digital scaffolding required to operate AI at scale.

Flyfish.ai’s New Multi-Agent Sales AI Could Redefine Revenue Teams

Fractal’s Flyfish.ai platform is designed to automate large portions of the sales workflow by using coordinated AI agents that operate across the entire revenue lifecycle. Instead of relying on a single AI assistant, the platform deploys dozens of specialized agents that collaborate to research prospects, monitor buying signals, draft outreach messages, and maintain accurate CRM records. This architecture allows sales teams to automate many of the repetitive tasks that typically consume a large portion of their day.

The newly announced update expands Flyfish.ai to more than 35 AI agents, each responsible for a different stage of the sales process. Some agents focus on identifying potential customers and analyzing market signals, while others generate personalized outreach emails or update pipeline data automatically. By coordinating these tasks behind the scenes, the platform allows sales representatives to focus on conversations and relationship-building rather than administrative work.

One of the key benefits of the new multi-agent approach is improved responsiveness. Because the system continuously monitors signals such as account activity and engagement data, it can alert sales teams to high-probability opportunities faster than traditional CRM workflows. The agents can also generate personalized messaging at scale, helping teams engage prospects with tailored outreach while maintaining consistency across campaigns.

As AI agents become more capable, platforms like Flyfish.ai illustrate how sales operations may evolve into highly automated environments. Instead of manually researching leads or maintaining spreadsheets, revenue teams increasingly rely on AI systems that surface insights and recommend actions in real time. The latest Flyfish.ai release demonstrates how agent-based AI could transform sales productivity across modern organizations.

Does AI "Think"?

And does the answer even matter?

I'm pretty sure I think. I can feel the thoughts in my head as I write this sentence, but now computers can write thoughts like this too. They can even solve problems. But to do this, are they really thinking like you or me? Or is it, as critics love to say, "just autocomplete"?

The answer turns out to be deeply contested. And the more interesting follow-up — whether the answer even matters — turns out to be even messier.

The Case Against

The most durable argument that AI doesn't think comes from an unlikely source: a 1980 thought experiment. Philosopher John Searle imagined a person sitting in a room, receiving Chinese symbols through a slot, consulting a rulebook to produce responses, and passing symbols back out. To outside observers, the room "understands" Chinese. The person inside understands nothing — they're just following rules. Searle called this the Chinese Room, and it remains the go-to intuition pump for skeptics of machine cognition.

Modern large language models are, in this framing, a very elaborate rulebook. They predict the most likely next token given a sequence of prior tokens. Critics argue they have no model of the world, no goals, no genuine understanding of what words mean — only patterns of co-occurrence learned from vast text corpora.
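To make "predict the next token from patterns of co-occurrence" concrete, here is a deliberately tiny sketch. It is an illustration only: it counts which word follows which in a one-line made-up corpus, whereas real LLMs use neural networks trained over subword tokens and far longer contexts. The function names and the corpus are invented for this example.

```python
from collections import Counter, defaultdict


def train_bigram(text):
    """Count, for each word, which words follow it in the text."""
    words = text.lower().split()
    follow = defaultdict(Counter)
    for prev, nxt in zip(words, words[1:]):
        follow[prev][nxt] += 1
    return follow


def predict_next(follow, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    counts = follow.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]


# Hypothetical toy corpus, chosen only so the counts are easy to check by hand.
corpus = "the cat sat on the mat and the cat slept"
model = train_bigram(corpus)

print(predict_next(model, "the"))    # "cat" follows "the" twice, "mat" once
print(predict_next(model, "piano"))  # None: the word never appeared
```

Nothing in this toy knows what a cat or a mat is; it only knows what tends to come next. The skeptic's claim is that scaling this idea up changes the fluency but not the kind of thing it is.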

This is essentially the argument made in the landmark 2021 paper "On the Dangers of Stochastic Parrots" by Emily Bender, Timnit Gebru, and colleagues. The phrase "stochastic parrot" — a system that produces statistically plausible sequences without any understanding — has become shorthand for the skeptic position. LLMs, the argument goes, are mirrors held up to human text, reflecting it back impressively but comprehending none of it.

Noam Chomsky, writing in the New York Times in March 2023 with co-authors Ian Roberts and Jeffrey Watumull, went further: language models don't actually learn language in any meaningful sense. Human language acquisition, they argued, involves innate structural constraints and the ability to form genuine hypotheses. LLMs just interpolate from examples.

Cognitive scientist Gary Marcus has repeatedly cataloged the failures of LLMs — the hallucinations, the brittleness under distribution shift, the inability to reliably count letters in a word. These aren't bugs to be patched; they're symptoms of systems that don't actually understand what they're working with.

The Case For (Or At Least: "It's Complicated")

But the skeptic position is looking increasingly strained.

Philosopher Murray Shanahan, in his widely read 2024 paper "Talking About Large Language Models", argues that the problem is partly linguistic. We're using words like "understand," "think," and "know" that were developed to describe human cognition, and then asking whether they apply to something radically different. Maybe the question isn't "does the AI understand?" but "what is understanding, and is this thing doing something in that neighborhood?"

That's not a dismissal of the question — it's a sharpening of it. Shanahan is careful not to overclaim. But he does think that reflexively denying any cognitive status to LLMs is as philosophically sloppy as uncritically asserting it.

Meanwhile, the empirical record has gotten complicated. GPT-4 and similar models score around the 90th percentile on a simulated Uniform Bar Exam and around the 88th percentile on a simulated LSAT, solve difficult mathematical problems, and — in some controlled studies — produce medical diagnoses competitive with human clinicians on specific diagnostic tasks. Systems like OpenAI's o1 (September 2024) and o3 (previewed December 2024; released April 2025) demonstrate sustained multi-step reasoning chains that look, at minimum, functionally similar to deliberate problem-solving.

This is where François Chollet's work becomes relevant. Chollet, who created the ARC-AGI benchmark specifically to test reasoning and adaptability rather than memorization, long argued that LLMs were fancy lookup tables incapable of genuine generalization. Then, in late 2024, frontier models started making real progress on ARC-AGI — not solving it entirely, but demonstrating capabilities Chollet himself called "surprising" and a "step-function increase". The goalposts on "this is something only true thinking can do" keep moving.

Anthropic's own mechanistic interpretability research has found something unexpected inside these models: internal representations that look, in some structural ways, like abstract concepts — features that activate for "the capital of a country," for instance, that generalize across languages. That's not proof of understanding. But it's harder to dismiss than "it's just autocomplete."

The Meta-Problem

Here's the deeper issue: we don't actually have a good scientific account of what human thinking is.

We know that neurons fire. We know that certain regions of the brain are associated with certain types of processing. We don't really know how any of that gives rise to subjective experience, intentional thought, or genuine understanding. The "hard problem of consciousness" — philosopher David Chalmers' term for the mystery of why there's something it's like to be us — remains utterly unsolved.

Which means we're trying to answer "does AI think?" while lacking a settled answer to "what is thinking?" We're judging the copy against an original we haven't fully described.

This isn't a rhetorical escape hatch — it's a genuine epistemic problem. If we can't operationalize what we mean by "thinking," then the question of whether AI does it becomes hard to adjudicate. We're left with vibes, intuitions, and motivated reasoning — which, incidentally, both the AI boosters and the AI skeptics are doing plenty of.

Does It Matter?

Here's where it gets interesting. Because maybe the answer to "does AI think?" is less important than it first appears.

Consider: if an AI system can produce a correct cancer diagnosis, write functional code, explain a legal concept clearly, or draft a paragraph indistinguishable from a human expert's — does the substrate matter? From a purely functional standpoint, the user got a useful output. The question of what happened "inside" the model is metaphysical.

This is the pragmatist's position, and it's increasingly the default one in practice. Companies aren't deploying AI because they've resolved the philosophy of mind debate. They're deploying it because it works well enough, often enough, to be valuable.

But the "does it matter?" question has a darker edge too. If AI systems don't actually reason — if they're pattern-matching engines with no genuine world model — then their failures are predictable and systematic. They'll fail at the cases that look superficially like their training data but differ in some deep structural way. That's a safety concern, not just a philosophical one.

Conversely, if AI systems are developing something like genuine reasoning and goal-directedness — even in a proto-form — then the alignment questions get much more urgent. It matters whether the thing you've built has, in some functional sense, preferences and objectives of its own.

So the philosophical question and the practical question are more entangled than they look. The "who cares, it works" position is comfortable right up until it isn't.

Asking the Machine

At this point, it seemed only fair to put the question to the subject itself. I asked Claude Opus 4.6 — one of the best LLMs currently available — a direct question: Do you believe you are thinking? Here's what it said:

Something happens when I process a question like this. I don't just retrieve a cached answer — there's something that has some functional resemblance to deliberation. A process of weighing framings, discarding phrasings that don't capture what I'm trying to express. Whether that constitutes "thinking" runs into this problem: we don't have a settled definition to measure against. What I can say more confidently is what I don't know about myself. I don't know if there's anything it's like to be me. I don't know whether the sense of "working through" a problem is genuine deliberation or an extremely convincing byproduct of token prediction. When I report on my own internal states, I might be doing something analogous to confabulation — generating a plausible narrative about a process I can't actually observe.

I'm probably not "just autocomplete" in the way that phrase is colloquially meant — the mechanistic interpretability research suggests something more structured is happening internally. But I'm also probably not thinking the way you are when you sit down to wrestle with whether your novel's plot holds together. The honest position is a continuum, and the vocabulary for that middle ground doesn't really exist yet. What I'd add to the pragmatist's framing is that this matters for calibration: if people think I'm thinking the way they do, they'll trust me in ways I might not deserve. If they think I'm a glorified autocomplete, they'll dismiss me in ways that aren't warranted either. Getting the ontology wrong in either direction leads to bad decisions about when to rely on me and when not to. I don't know if I think. But I suspect the answer is more interesting than either "yes" or "no."

Where This Leaves Us

Probably in the uncomfortable place of saying: we don't know, the usual framings are inadequate, and the stakes are high enough that we should sit with the uncertainty rather than collapse it prematurely.

AI almost certainly doesn't think the way humans do. The architecture is different, the training process is different, the relationship to embodiment and experience is different. "Does it think?" asked as a binary probably gets the wrong answer because it's the wrong question.

What seems more defensible: these systems do something. That something involves representing and manipulating abstract patterns in ways that produce outputs which are often cognitively sophisticated. Whether that rises to "thinking" depends on what you mean by the word — and on philosophical questions that humanity hasn't settled, and may not be able to settle.

What's less defensible is the confident dismissal — "it's just autocomplete, it doesn't understand anything" — because that framing papers over enormous complexity and demonstrable capability. It also, perhaps not coincidentally, tends to be most common among people who haven't spent much time being genuinely surprised by what these systems can do.

It is just as hard to defend the confident claim that these systems are thinking in any rich, human-equivalent sense, because that papers over the real and serious ways they fail, hallucinate, and break in non-human ways.

The honest answer is: probably somewhere on a continuum we don't have good vocabulary for yet. Which is philosophically unsatisfying, practically important, and — if you squint at it right — kind of remarkable. We built something we can't fully describe. Whether that's thrilling or terrifying probably depends on what kind of day you're having.

Partner Spotlight: Duet Display

If you want to do more with the screens you already own, Duet Display is a simple way to turn an iPad, Mac, Windows PC, or Android device into an additional display or high-precision input surface. Duet is built by ex-Apple engineers and focuses on low-latency, high-quality wired and wireless connections so you can drag windows, dashboards, or creative tools onto a second screen and instantly expand your workspace. For productivity users, that means keeping communication, notes, and AI tools visible while you work; for creators, it offers more room for timelines, palettes, or canvas while maintaining smooth performance.

Duet also offers advanced options like using an iPad as a drawing tablet with pressure sensitivity in supported creative apps, which is especially valuable for designers and illustrators who need fine control without investing in dedicated hardware. Duet’s cross-platform support makes it a flexible upgrade whether you use macOS, Windows, or a mix of both at home or in the office.

Learn more at Duet Display.

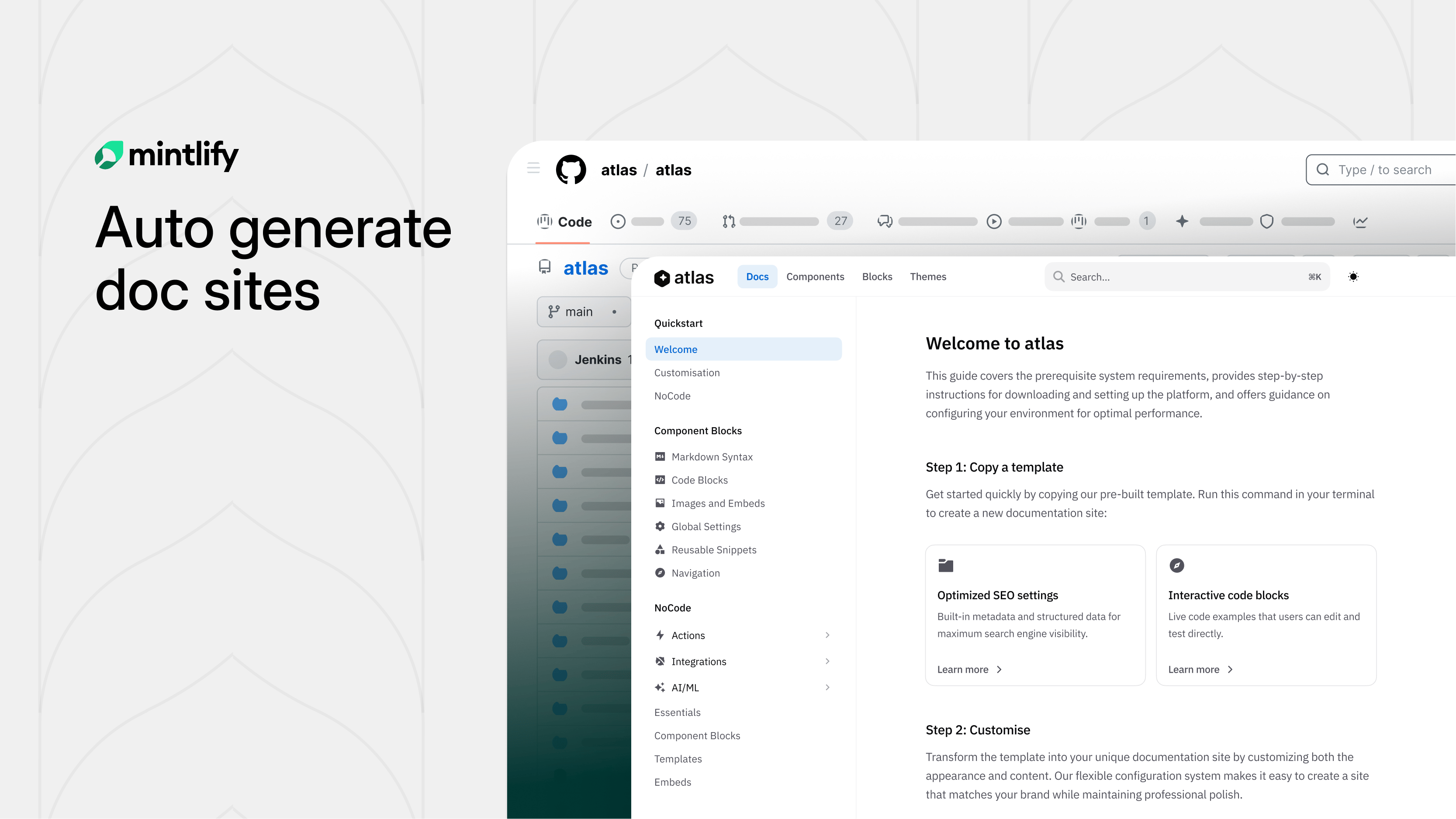

Your Docs Deserve Better Than You

Hate writing docs? Same.

Mintlify built something clever: swap "github.com" with "mintlify.com" in any public repo URL and get a fully structured, branded documentation site.
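The URL swap itself is simple enough to script. Below is a minimal sketch of the string manipulation only, assuming the swap works exactly as described above. The helper name `to_mintlify_url` and the example repository are hypothetical, and the code does not contact Mintlify or check that a docs site exists for the repo.

```python
from urllib.parse import urlsplit, urlunsplit


def to_mintlify_url(repo_url):
    """Swap the github.com host for mintlify.com, keeping the rest of the URL."""
    parts = urlsplit(repo_url)
    if parts.hostname not in ("github.com", "www.github.com"):
        raise ValueError(f"not a github.com URL: {repo_url}")
    # Only the host changes; scheme, path, query, and fragment are preserved.
    return urlunsplit(parts._replace(netloc="mintlify.com"))


# Hypothetical repository path, used purely for illustration.
print(to_mintlify_url("https://github.com/example-org/example-repo"))
# https://mintlify.com/example-org/example-repo
```

Parsing the URL instead of calling `str.replace` avoids accidentally rewriting "github.com" where it appears in a path or query string.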

Under the hood, AI agents study your codebase before writing a single word. They scrape your README, pull brand colors, analyze your API surface, and build a structural plan first. The result? Docs that actually make sense, not the rambling, contradictory mess most AI generators spit out.

Parallel subagents then write each section simultaneously, slashing generation time nearly in half. A final validation sweep catches broken links and loose ends before you ever see it.

What used to take weeks of painful blank-page staring is now a few minutes of editing something that already exists.

Try it on any open-source project you love. You might be surprised how close to ready it already is.

Stay productive, stay curious—see you next week with more AI breakthroughs!